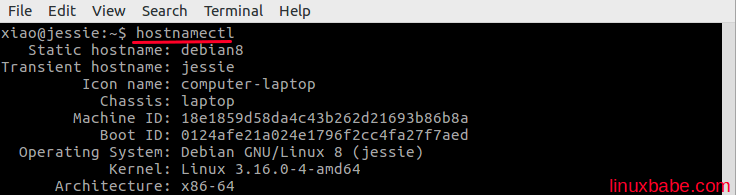

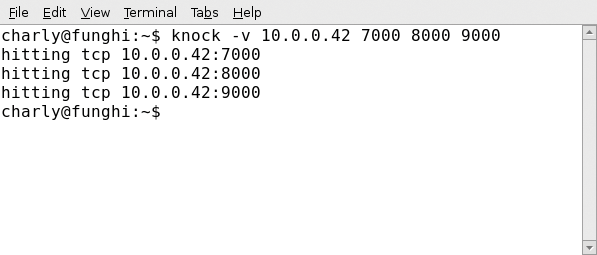

This section walks through the code that implements the Knock Knock server program, KnockKnockServer. The server program begins by creating a new ServerSocket object to listen on a specific port. When running this server, choose a port that is not already in use.

I want to update the hostname for one of my VMware virtual machines running SUSE Linux Enterprise Server 11 SP3 for VMware. I tried changing the hostname using YaST, but after a reboot the old name came back. I tried echo 'newhostname' > /etc/HOSTNAME, but after a reboot this was also gone. I also tried hostname newhostname, but this was also lost after a reboot.

To edit the names of the computers in your team, sign in with your RealVNC account and go to the 'Computers' page. Click on the pencil icon next to the computer name to edit the name. The server and any viewers should update to show the new name after a short while.

When using distributed training, a GPU is bound to its process using the local rank variable. Since knockknock works at the process level, if you are using 8 GPUs, you would get 8 notifications at the beginning and 8 notifications at the end. To circumvent that, except for errors, only the master process is allowed to send notifications, so that you receive only one notification at the beginning and one notification at the end.

Note: In PyTorch, the distributed launch utility sets up a RANK environment variable for each process. This variable is used to detect the master process, and for now it is the only simple way I came up with. Unfortunately, this is not intended to be general for all platforms, but I would happily discuss smarter/better ways to handle distributed training in an issue/PR.

You can also specify an optional argument to tag specific people: user-mentions= and/or user-mentions-mobile=.
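The master-process check described above can be sketched roughly as follows. This is a minimal illustration, not knockknock's actual code: the function names `is_master_process` and `should_notify` are hypothetical, and the sketch assumes that rank 0 is the master and that a missing RANK variable means a plain single-process run.

```python
import os


def is_master_process(environ=None):
    """Return True if this process looks like the master (rank 0).

    Assumption: the launcher exports RANK for every process, and a
    missing RANK means we are not in a distributed run at all.
    """
    env = os.environ if environ is None else environ
    rank = env.get("RANK")
    if rank is None:
        return True  # single-process run: always allowed to notify
    return int(rank) == 0


def should_notify(environ=None, is_error=False):
    """Decide whether this process may send a notification.

    Errors are reported from any process; start and end messages
    are sent by the master process only.
    """
    return is_error or is_master_process(environ)
```

With 8 processes (RANK 0 through 7), only the process with RANK=0 would send the start and end notifications, while a crash in any process would still be reported.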
